We are still waiting for the money promised by the state and the federal government for our HBFG application (again, that’s the “Hochschulbauförderungsgesetz”), which will hopefully reduce or eliminate the storage/SAN problem we currently have. Right now we have two Cisco MDS9216 (that’s a 16-port 2 Gbps SAN switch, two of them for redundancy), which means we only have 16 SAN ports. That isn’t just not much, it’s too few: we have 30 machines or so that *really* need access to the SAN, so we end up either unplugging some of them from the SAN or merging them onto a few big machines (like our x366).
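As a back-of-the-envelope sketch of that port math: the snippet below assumes every SAN-attached machine takes one port on each of the two switches, so the redundant pair still only offers 16 host connections. The function name and the `ports_reserved` parameter (for ports eaten by storage controllers or inter-switch links) are made up for illustration, not something taken from our actual setup.

```python
def san_port_shortfall(hosts_needing_san: int,
                       ports_per_switch: int,
                       ports_reserved: int = 0) -> int:
    """Return how many hosts cannot get a port on each fabric (0 if all fit).

    Assumption: every host connects once to each of the two redundant
    switches, so the usable host count equals the ports on ONE switch
    minus whatever is reserved for storage or uplinks.
    """
    usable = ports_per_switch - ports_reserved
    return max(0, hosts_needing_san - usable)


# Roughly 30 machines want SAN access, each switch has 16 ports.
shortfall = san_port_shortfall(hosts_needing_san=30, ports_per_switch=16)
print(f"Machines left without a SAN port: {shortfall}")
```

Any ports the storage itself occupies make the gap bigger, which is why consolidating onto a few big boxes is the only short-term way out.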

The other side of the problem is the storage. Currently it isn’t redundant, which means we’re fucked if the storage decides not to come up, or if one of the controllers goes up in smoke. So we’re looking at two DS4700, each with 2 enclosures filled with 300 GB 2 Gbps FC disks. That will hopefully also solve our constant lack of rack space.

Apart from that, we took a look at the terminal server market: we heard someone from Citrix and looked at 2X ourselves (and I think we are going with the 2X solution, even if they don’t support authentication passthrough yet). We might want to consider buying dedicated hardware for the terminal servers. I implemented them running on the ESX, which isn’t a permanent solution: at least the students will work on those terminal servers from 0700 to 2200, which means continuous load during that time, and that isn’t good for the ESX cluster, as its hosts are pretty loaded already.

We’re also looking into buying a third box for the ESX cluster to get some extra capacity, probably the same model as we have currently (that is, an x366 with 2 DC Xeons, 16 GB RAM, 2×73 GB SAS, 2x dual-port Intel NIC, 2x dual-port FC HBA).

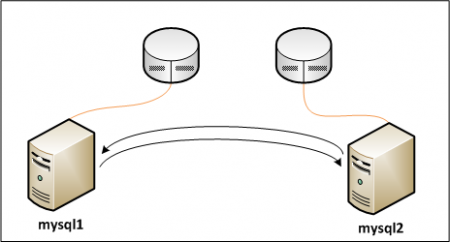

Recently I did some experiments with Gentoo as a MySQL cluster (master<->master replication for our upcoming database servers – that’s what the blade chassis and the two blades are for) and noticed that the Gentoo VMs were sucking up RAM and not releasing it, so I had to reset them every morning to free some RAM. I guess I should poke Chris a bit about that, as he told me back at FOSDEM that he was doing some load testing with a similar setup not so long ago.